Data collection methods have evolved dramatically, especially with the ability to collect big data. Logging information quantitatively for statistical analysis reduces human error and improves the validity of the data collected, which implies that whatever the data is used for will be more reliable. However, cognitive biases can still creep into the analysis of the data, which can call into question the usefulness of the recommendations drawn from it. What are the most common ones and how do we start tackling them?

Confirmation Bias

Confirmation bias is the tendency to set out to prove a hypothesis and therefore to lean heavily on the data that supports it. It skews results because the analysed data no longer represents the full picture of the scenario. For example, an analysis may set out to prove that Twitter users were more engaged with a TV show while it was on air, and neglect the fact that the greater cumulative engagement occurred in the days afterwards, once viewers had had a chance to digest the episode. The resulting recommendations could lead companies to release show-related online material at the wrong time. One of the most galling examples of confirmation bias came with the 2016 US Presidential election, where polls were gathered on the assumption of a Clinton win, ignoring evidence that pointed the other way.

Availability Bias

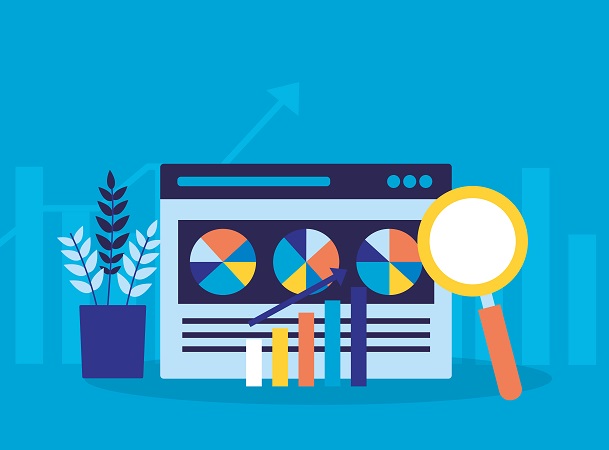

The availability heuristic is one of a number of phenomena that affect everyday decision-making without most people being aware of it. Essentially, availability bias is the tendency to make decisions based only on the information that is readily available. For example, an analysis may find that visitors spend time reading a website’s blog, and the business may respond by developing the blog in the hope of converting readers into buyers or returning customers. However, because the blog’s success is the only piece of information relied on, other factors may be neglected. For instance, the blog could attract readers but generate very little engagement, in which case developing the blog alone would create no conversions. Value Walk’s article on how behavioural finance can help stock market investors includes the image shown here, which outlines the availability heuristic in simple terms and shows how the perspective needs to shift to take in all the information available. In this example, the blog would be the small yellow circle and the rest of the website the larger blue circle.

Source: JamesClear.com

Selection Bias

Selection bias occurs when the sample the data was collected from is unrepresentative of the wider population. Imagine the makers of a console game collect data on how long players spend in the game and then use it to guide development. The data covers only existing users and says nothing about what might convert a non-user into a fan of the game. In another example, a survey of Xbox gamers overestimated the prevalence of the “red ring of death” console fault, because those who had experienced the fault were more likely to complete the survey.
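
To see how much a skewed response rate can distort a survey like the console fault one, here is a minimal sketch in Python. Every number in it is a made-up assumption for illustration (the true fault rate and both response rates are not from any real survey), and the function name `observed_rate` is hypothetical.

```python
def observed_rate(true_rate, respond_if_affected, respond_if_unaffected):
    """Share of survey respondents who report the fault.

    true_rate: assumed real share of owners who had the fault.
    respond_if_affected / respond_if_unaffected: assumed probability
    that an owner in each group completes the survey.
    """
    affected_responses = true_rate * respond_if_affected
    unaffected_responses = (1 - true_rate) * respond_if_unaffected
    return affected_responses / (affected_responses + unaffected_responses)


# Hypothetical figures: 10% of owners had the fault, and affected
# owners are five times as likely to fill in the survey.
true_rate = 0.10
survey_rate = observed_rate(true_rate, 0.50, 0.10)

print(f"true rate: {true_rate:.0%}, rate among respondents: {survey_rate:.0%}")
```

Under these assumed figures the survey reports a fault rate several times higher than the real one. When both groups respond at the same rate, the function returns the true rate, which is one way to sanity-check whether a sample is likely to be representative.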

Confounding Variables

One of the most dangerous biases arises when an apparent correlation between two variables actually depends on an overlooked third factor, a confounding variable, whose influence cannot be separated from that of the variables being studied. For example, an analysis may find that a commercial for a children’s theme park leads to website check-ins when it airs during prime time on a children’s channel that is broadcasting a show about the theme park itself. As the analyst cannot state empirically whether it is the commercial or the TV show that drives the higher rate of check-ins, the data is affected by a confounding variable. Before claiming a relationship between two variables, check that the data collected can demonstrate it without being influenced by anything external.
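
The theme park scenario can be sketched in a few lines of Python. The viewing log below is entirely hypothetical, as are the helper names `check_in_rates` and `unobserved_cells`. The sketch assumes, as in the example, that the commercial only ever airs during the show, so every viewer saw either both or neither.

```python
from itertools import product

# Hypothetical viewing log: (saw_commercial, saw_show, checked_in)
records = [
    (True, True, True),
    (True, True, True),
    (True, True, False),
    (False, False, False),
    (False, False, False),
    (False, False, True),
]


def check_in_rates(rows):
    """Check-in rate for each observed (commercial, show) combination."""
    totals = {}
    for commercial, show, checked_in in rows:
        seen, hits = totals.get((commercial, show), (0, 0))
        totals[(commercial, show)] = (seen + 1, hits + int(checked_in))
    return {cell: hits / seen for cell, (seen, hits) in totals.items()}


def unobserved_cells(rows):
    """Combinations of exposure that never appear in the data."""
    observed = {(commercial, show) for commercial, show, _ in rows}
    return [cell for cell in product([True, False], repeat=2) if cell not in observed]


rates = check_in_rates(records)
gaps = unobserved_cells(records)

print("check-in rates:", rates)
print("never observed:", gaps)
```

Viewers exposed to both check in more often than viewers exposed to neither, but the log contains nobody who saw the commercial without the show or the show without the commercial. Without those two groups, the data cannot say which of the two is responsible for the extra check-ins.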

Data collection can be time-consuming and unruly, but completing it successfully can pay dividends for a business, especially given the impact of big data. Biases can be mitigated to ensure that the statistical recommendations have a low margin of error.
